This blog is co-authored by Jeff Bollinger & Gavin Reid

When Charles Darwin stated "Ignorance more frequently begets confidence than does knowledge," civilization's evolution from Industrial Age to Information Age was nearly a century away. Yet, when it comes to many aspects of IT, he nailed it. In today's world, a measured, consistent, and creative approach to incident response and security monitoring delivers the most effective and efficient results for your organization. This blended approach makes human analysts the most critical component of any security operations center (SOC). SOCs employ security analysts of varying skill and experience levels, so maintaining a consistent level of response can be difficult. Plus, cognitive biases can arise throughout any type of analysis or investigation, and they can lead to false conclusions or other errors. So if your goal is to understand the source, root cause, and impact of a threat (security incident), the analysts you rely on must be able to understand and avoid cognitive biases in their work.

One common cognitive bias among human analysts, known as the Dunning-Kruger (DK) effect, can sneak in and create problems with accuracy and consistency. DK is a cognitive bias in which relatively unskilled people may suffer an illusion of superiority and believe that their abilities are much higher than they really are. This is caused by their inability to recognize their own limits and accurately evaluate their own abilities.

"When researchers Dunning and Kruger asked participants to perform specific tasks (such as solving logic problems, analyzing grammar questions and determining whether jokes were funny), they took it one step further. After completing the task, they asked the participants to evaluate their own perceived performance in comparison to other participants.

Dunning and Kruger then divided the results into four groups, depending on actual performance, and found that all four felt their performance was above average. This meant that the lowest-scoring group (the bottom 25%) showed a very large illusory superiority bias. The two researchers attributed this to the fact that the individuals who were worst at performing the tasks were also worst at recognizing the skill needed to perform the task. This was later supported by the fact that, given training, the worst subjects improved their estimate of their rank as well as getting better at the tasks." [1]

Falling victim to the DK effect isn't always a sign of lacking intelligence. In the case of incident response, it could simply mean the analyst is over-confident when in fact they are just unprepared. As a result, they think they are capable of completing an investigation when in reality they lack some of the resources and knowledge needed to make the right observations. Basically, "not knowing what you do not know" may be the challenge many incident response teams need to overcome, rather than an outside influence. So, is there a way your team can be sure they are fully prepared and informed, and not suffering from the DK effect?

Fortunately, yes, there is. It's always been easy for incident responders to jump to conclusions based on the first piece of relevant data they discover. After all, analysts are humans too and want to quickly solve security problems. But when combined with a poor understanding of their organization's own capabilities, this can lead analysts to jump to wrong or incomplete conclusions. This is an expected behavior, so be sure to plan for it. No matter how hard you try, you can't make all people the same, and you really wouldn't want to. Therefore, it is extremely important to have a well-thought-out and documented playbook to ensure a consistent approach, regardless of skill level. Remember that no two analysts will behave the same way on a consistent basis. So be sure to account for that in your SOC playbook so that you and your team develop a realistic understanding of, and approach to, investigation and documented response.

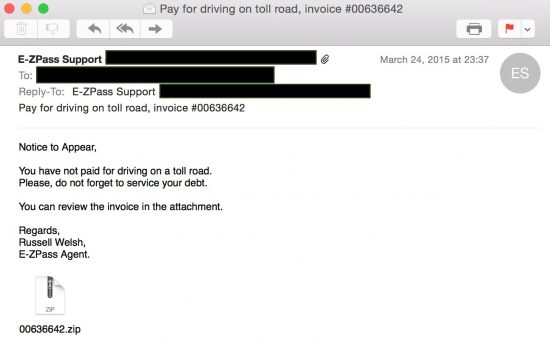

Let's take a quick look at a scenario to help illustrate this:

Up to this point, a solid analysis approach has been chosen and executed, but can you be certain that the problem is resolved? Shouldn't your analysts also be asking:

Now suppose a few weeks later a similar incident is detected when a separate host attempts to resolve a domain redirected to your RPZ sinkhole:

As we see, the original issue resurfaced because Analyst #1 fell victim to the DK effect. Their confidence in the output, based on the information they processed, was too high. Plus, their lack of experience in thorough investigations led them to believe they had enough information to be confident in their findings. Fortunately, Analyst #2's more thorough analysis revealed additional indicators and led to a better overall response. In keeping with the Dunning-Kruger findings, Analyst #2 indicated that more analysis of host-based indicators was necessary to determine the true impact on infected hosts. Basically, the seasoned analyst, prepared by experience, did not feel confident the analysis was complete, while the junior analyst, less prepared, became confident too early in the process.

Quality measurements can be subjective, but there may be quantitative ways to compare the work of analysts. Decision trees, flow charts and other organizational aids can all help increase consistency in the response approach for all analysts. Thorough documentation that provides clear and direct instructions on how to perform analysis tasks will also help your organization dump the Dunning-Kruger effect. But in the end, it is a step-by-step methodology outlining the investigative process, one that serves to standardize approaches while introducing checks and balances, that will help your organization deploy the right approach in the right situation. By doing so, you can prepare your analysts for the increasing threats that lie ahead and prevent "over-confidence" from destroying your organization's confidence in their incident response.
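One way to picture such checks and balances is a small closure gate: an investigation cannot be marked complete until every playbook question has an evidence note attached. The sketch below is only an illustration under stated assumptions. The check names, the `Investigation` class, and its methods are hypothetical and would need to be replaced by whatever your own playbook defines.

```python
from dataclasses import dataclass, field

# Hypothetical playbook questions (assumptions for illustration only).
# The first three mirror "source, root cause, and impact"; the last one
# reflects the idea of looking beyond the first affected host.
REQUIRED_CHECKS = (
    "source_identified",
    "root_cause_identified",
    "impact_assessed",
    "other_hosts_searched",
)


@dataclass
class Investigation:
    analyst: str
    # Maps a check name to the evidence note the analyst recorded for it.
    completed: dict = field(default_factory=dict)

    def record(self, check: str, evidence: str) -> None:
        """Mark a check as done, but only with a non-empty evidence note."""
        if check not in REQUIRED_CHECKS:
            raise ValueError(f"unknown check: {check}")
        if not evidence.strip():
            raise ValueError("evidence note must not be empty")
        self.completed[check] = evidence

    def missing_checks(self) -> list:
        """Return the playbook checks that still lack evidence, in order."""
        return [c for c in REQUIRED_CHECKS if c not in self.completed]

    def can_close(self) -> bool:
        """The investigation may close only when nothing is missing."""
        return not self.missing_checks()
```

With a gate like this, an analyst who has only recorded `source_identified` would still see three outstanding checks from `missing_checks()`, so the decision to close depends on documented evidence rather than on how confident the analyst feels.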

[1] https://en.wikipedia.org/wiki/Illusory_superiority#cite_note-unskilled-8

Hot Tags :

Security Operations Center (SOC)

CSIRT

best practices

PubSec
