When you read about "the Edge," do you always know what is meant by that term? The edge is a very abstract term. For example, to a service provider, the edge could mean computing devices close to a cell tower. For a manufacturing company, it could mean an IoT gateway with sensors located on the shop floor.

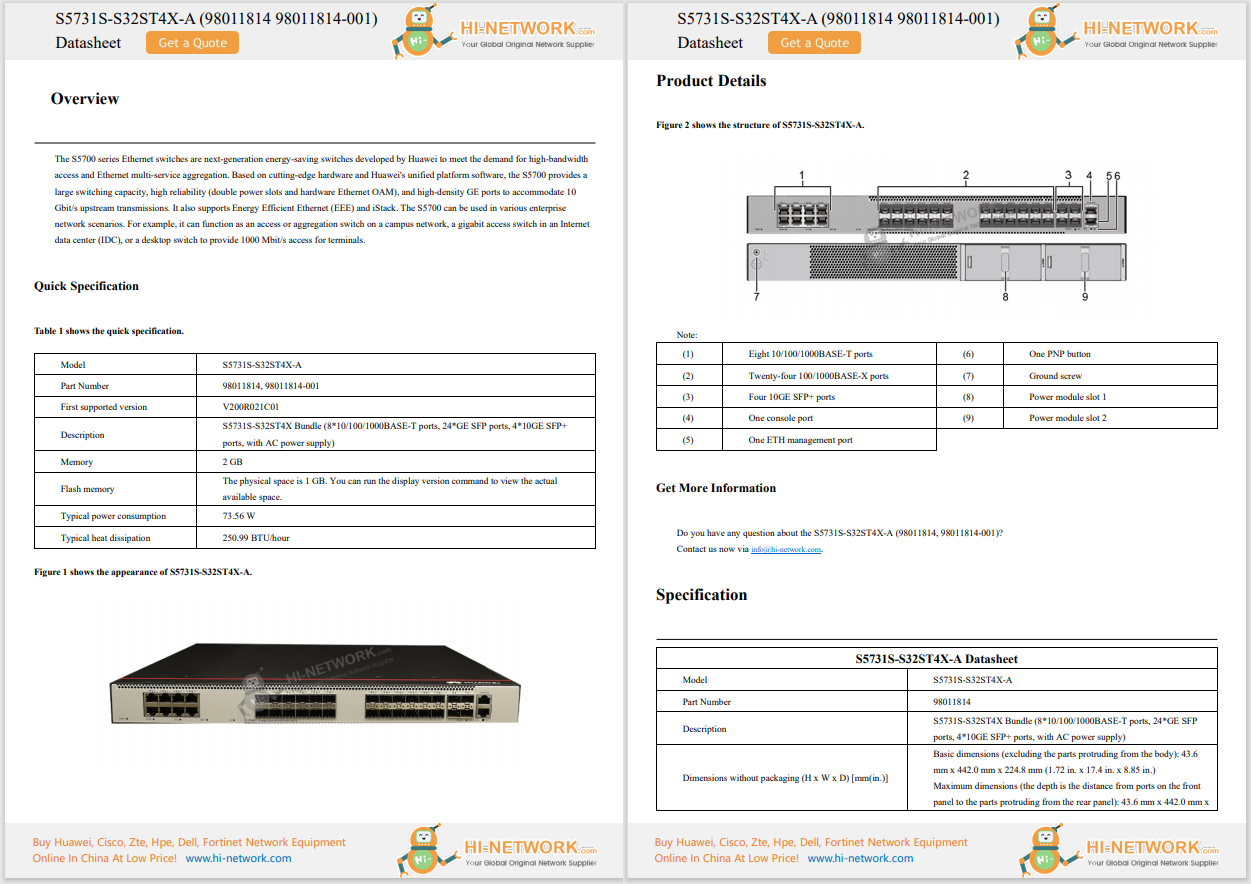

The edge needs a finer categorization, and fortunately several approaches exist. One of them comes from the Linux Foundation's LF Edge, which divides the edge into a User Edge and a Service Provider Edge. The Service Provider Edge provides server-based compute for the global fixed or mobile networking infrastructure. It is usually consumed as a service provided by a communications service provider (CSP).

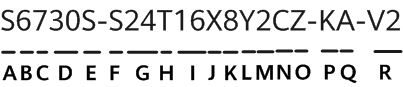

The User Edge, on the other hand, is more complex. The computing devices are highly diverse, the environment can differ for each node, hardware and software resources are limited, and the computing assets are usually owned and operated by the user. For example, in the On-Prem Data Center Edge sub-category, computing devices are very powerful and can be rack or blade servers located in local data centers. In the Constrained Device Edge sub-category, microcontroller-based computing devices (such as those found in modern refrigerators or smart light bulbs) are used.

Overview of the Edge Continuum (based on the Linux Foundation whitepaper)


Currently, one of the most interesting edge tiers is the Smart Device Edge. This tier uses cost-effective, compact compute devices, especially for latency-critical applications. For me, this is the true Internet of Things (IoT) edge computing tier. These devices are distributed in the field, for example in remote and rugged environments, or embedded in vehicles.

Why is it hot right now? For me, there are three key factors.

Edge native applications are defined as "an application built natively to leverage edge computing capabilities, which would be impractical or undesirable to operate in a centralized data center" (see definition). These applications are usually distributed across multiple locations and therefore must be highly modular. They also need to be portable so they can run on different types of hardware in the field, and they must be developed for devices with limited hardware resources. These applications increasingly leverage cloud-native principles such as containerization, microservice-based architecture, and Continuous Integration / Continuous Delivery (CI/CD) practices.

Another challenge is the deployment and management of these applications. Suitable application lifecycle management is important to provide horizontal scalability, ease deployment, and even accelerate the development of edge native applications.

If you want to find out more, do not miss this Cisco Live session! How do I operate applications across thousands of locations? How do I run AI apps on a set of nodes with limited CPU and memory? These questions will be answered!

Attend Cisco Live Virtually!

Register here to attend these sessions online!

Join our daily livestream from the DevNet Zone during Cisco Live!

Sign up for the DevNet Zone Cisco Live Email News and be the first to know about special sessions and surprises, whether you are attending in person or will engage with us online.

We'd love to hear what you think. Ask a question or leave a comment below.

And stay connected with Cisco DevNet on social!

LinkedIn | Twitter @CiscoDevNet | Facebook | YouTube Channel

Hot Tags :

Cisco Live

Cisco Industrial IoT (IIoT)

Cisco Live 2022

Cisco Live 2022 DevNet Zone

smart device edge
